By Henry Mao

GPT 3 and SEO: Why AI Will Revolutionize Your Content Forever

We've all heard the buzz by now.

OpenAI has released the third version of its Generative Pre-trained Transformer (GPT-3, or GPT 3 for short) with beta API access. GPT 3, much like its predecessor GPT 2, is a large deep neural network that can automatically generate text realistic enough to fool the average human. It is an advanced AI that learns to imitate human writing from text on the web.

Here's an excerpt of an article generated by GPT 3:

In the years after the Second World War, the economy of the Western world, and especially the economies of Europe, started to recover and to show growth. The rebuilding of factories, of roads and bridges, of cities and houses, of harbors and airports had started. The economy of Europe had become an engine of growth for the whole Western world. Countries, companies and people prospered, and a great period of economic growth, rising wages, and improved living standards started in Europe. The combination of rebuilding and new investment made Europe a great place to do business. For companies, Europe was a vast market, with its members more or less unified in terms of market regulation, infrastructure, investment policy, and culture. Firms set up factories, invested, and found new markets, and these investments were largely debt-funded.

These results have undoubtedly surprised the public and generated a lot of hype. The generated text reads well and is grammatically correct - but GPT 3 is not just a powerful text generator. The technology marks a fundamental shift in how we have to think about content creation, marketing, and SEO (Search Engine Optimization). Short snippets of content, like the one shown above, can be created easily and at low cost.

As SEO experts and content creators, it is imperative that we understand GPT 3. Does this mean human writing is obsolete? Can it produce high-quality copy? Does this mark a doomsday scenario where SEO-spam bots churn out unlimited garbage?

While there is some truth to these sentiments, we think the hype surrounding GPT 3 calls for more clarity. To understand the impact of text generation technologies on SEO and content writing, we first need to break down what GPT 3 does, why it matters, and how it works.

The Generality of GPT-3

GPT-3 and its predecessor technologies (GPT and GPT 2) are a line of research on general NLP (Natural Language Processing) models developed by OpenAI. But what does it mean to be general?

Machine learning has a long history of developing systems that are good at just one thing. These systems are called narrow AI. If you want an AI that predicts the rating of an Amazon review - you can easily train one if you have enough training data. If you want to develop a model that can look at a profile picture on social media and tell you who it is - you can train another model that will do the job.

The problem is that an AI system trained on one of these tasks is unable to work on anything else - hence the term narrow. It is constrained to the scope it was trained on. The current holy grail of AI research is to seek more general technologies - AIs that can do many things. Here's why general technologies are game-changers.

Why build AI generalists?

A common sentiment goes - shouldn't specialized experts be preferred?

Back in the early days of computing, people created specialized computers that could only calculate and solve one type of problem. Imagine having a specialized calculator that can only do addition, but nothing else. Sure, it is really good at addition and can do it very fast, but that wouldn't be too useful.

Instead, it is much more useful to have a computer that can add, subtract, go online, play video games, etc. Modern computers based on the von Neumann architecture have these general capabilities. In hindsight, it is easy to say that general-purpose computing is one of humanity's most impactful inventions.

The same principle applies to AI technologies like GPT 3. We want generality in our systems because it enables us to solve many more problems without hand-engineering a solution for each task. Plus, general learning approaches have been shown to increase AI accuracy on NLP tasks by at least 60%.

After all, human beings are a form of general intelligence. General intelligence enables us to acquire skills we don't even know are useful beforehand. For those interested in what it means to have general intelligence, we recommend Chollet's paper On the Measure of Intelligence.

For SEO marketing, this means we don't need to know in advance what type of content we want to produce. We don't need to create a different AI for a slightly different purpose.

GPT-3 is an AI system that exhibits some properties of general intelligence (sometimes called Proto-AGI). For example, we can prompt the AI with a character dialogue and ask it to continue the conversation:

Rex is a time traveller from the future. Ada is a nineteenth-century noblewoman.
Rex: I think I crashed my time machine in your garden.
Ada: Pardon me? What did you say young man?

It can also perform a variety of other tasks and even generate HTML code. This is a big deal because it means we can solve many content-related tasks with GPT.

So does this mean GPT 3 can solve all relevant tasks related to SEO? Can it create blog posts for any topic or content for any category we desire? Not quite. To answer that question, we need to break down how GPT 3 works.

How GPT 3 Learns

Leveraging Big Data

Machine learning models (and especially deep neural networks) are data-hungry and only work well when you supply them with a lot of data. After all, data is the new oil.

But getting data is hard and costly. Most useful machine learning systems require humans to laboriously label each and every data point. Labeled data is usually the primary bottleneck in many applications because it is expensive to gather - imagine the cost of hiring a fleet of Amazon Turkers!

GPT 3 works around this problem by creating its own training signal by modeling naturally occurring text on the web. It adopts a machine learning paradigm called unsupervised (or self-supervised) learning. This allows learning without human-labeled data. For those who want to delve into the technical details of unsupervised learning, our CTO has written an in-depth analysis here.

But even without labels, we need a lot of data, right?

Turns out, the data is right under our nose. The internet contains a ton of high-quality, well-written articles about a variety of topics - and they're all easily accessible. The beauty of GPT's training technique is that it simply needs to learn how to predict these human-written articles to perform well.

But wait - isn't there a lot of garbage out on the web? Wouldn't GPT 3 learn that as well?

That is true. The creators of GPT mitigated some of these issues by using crowdsourcing to curate its data. One way to do this is to look at the URLs people share on Reddit, and only crawl content and posts from websites with a large number of Reddit upvotes.
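To make the curation idea concrete, here is a minimal sketch of an upvote filter. It is not OpenAI's actual pipeline: the `SharedLink` record, the `select_crawl_urls` helper, and the threshold of 3 upvotes are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class SharedLink:
    """One link shared on a social site (hypothetical record for illustration)."""
    url: str
    upvotes: int


def select_crawl_urls(links, min_upvotes=3):
    """Keep each URL whose best-scoring share meets the upvote threshold."""
    best = {}
    for link in links:
        # A URL can be shared several times; remember its highest score.
        best[link.url] = max(best.get(link.url, 0), link.upvotes)
    return sorted(url for url, score in best.items() if score >= min_upvotes)


links = [
    SharedLink("https://example.com/essay", 120),
    SharedLink("https://example.com/spam", 0),
    SharedLink("https://example.org/tutorial", 2),
    SharedLink("https://example.org/tutorial", 15),
]

for url in select_crawl_urls(links):
    print(url)
```

The point of the design is that people, not an algorithm, vote on what counts as worth reading, so the crawler only has to count.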

Learning by Language Generation

Once you have the data, you can now train GPT. But how can you train GPT to get all these general capabilities that we desire? One idea is simply to do text generation. GPT learns to generate natural language by predicting the next word in an article from the previous words.

That is the main reason why GPT only generates content from left to right (it cannot do so backward). This type of learning is called language modeling.

It is as simple as that.

By predicting what word comes next in a sentence, the AI must learn how to make use of the other words in its context. This implicitly forces GPT to learn a great deal of general knowledge.
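Next-word prediction is easy to try at toy scale. The sketch below is a simple bigram counter, not a neural network and nothing like GPT's real architecture; it only looks at one previous word, whereas GPT uses a long stretch of context. The function names and the tiny corpus are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts


def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


def generate(model, start, max_words=5):
    """Generate left to right, one predicted word at a time."""
    out = [start]
    for _ in range(max_words):
        nxt = predict_next(model, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return " ".join(out)


corpus = "the cat sat on the mat . the cat ate the fish . the cat sat down ."
model = train_bigram_model(corpus)
print(generate(model, "the", max_words=2))
```

Even this toy shows why generation runs left to right: each new word is chosen from what has already been written.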

What I cannot create, I do not understand.

-- Richard Feynman

In order to correctly predict the next word, you must also have some common sense understanding of our world in addition to basic things such as English syntax and grammar. That's how simply doing article prediction enables GPT to learn astonishing human-like behaviors.

Language generation systems have a long history in machine learning, and GPT is not new to the game. In fact, some AI researchers consider GPT less a scientifically novel achievement than an impressive engineering feat. It teaches us an important lesson about what $4+ million USD spent on computing resources combined with a large amount of data can and cannot get us.

So what's the verdict?

OpenAI showed us that scaling AI solutions can get us quite far. GPT, when scaled to its largest size, can extract a lot of general capabilities simply by observing how humans write. This is why you see such impressive performance from the model. Google has since scaled a related Transformer-based model, the Switch Transformer, to about 1.6 trillion parameters, roughly nine times GPT-3's 175 billion.

It is the bitter lesson realized by many AI researchers: solutions led by computation and learning trump manual human effort. By scaling a simple generation framework, we get GPT 3, which writes almost like a human.

But GPT 3 doesn't come without its limitations. As SEO and content marketers, knowing these limitations is highly important and influences how we can leverage this natural language technology.

Limitations of Text Generation

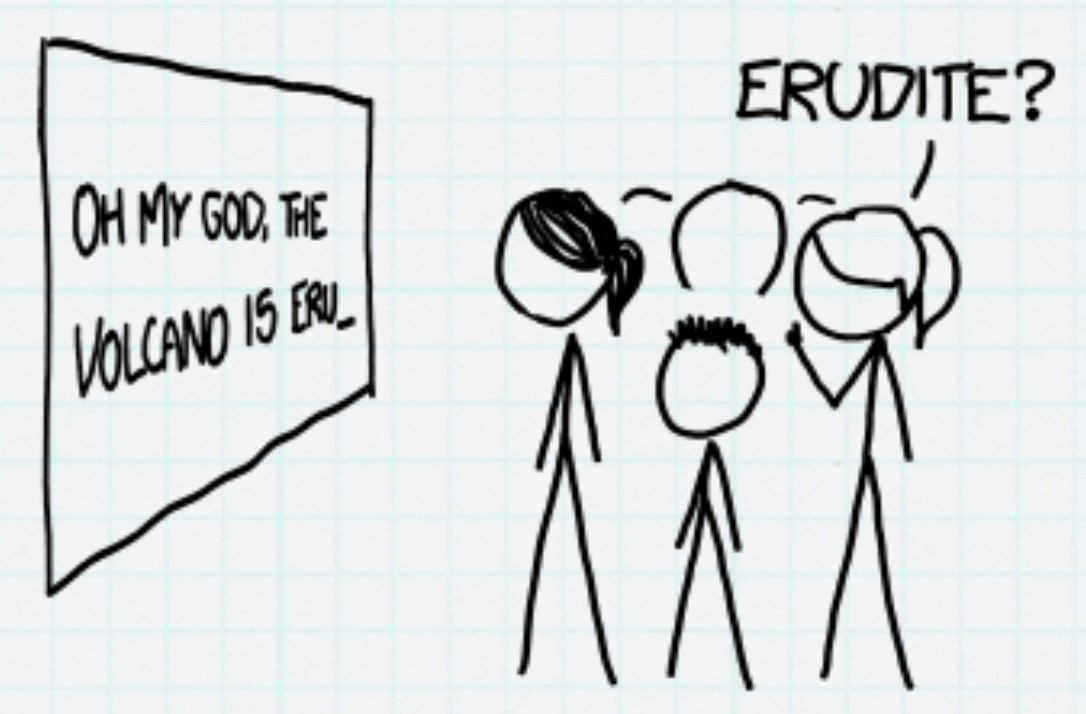

Poor World Model and Factual Correctness

Despite the hype, GPT does not have a good understanding of our world. One interesting way to see this lack of world model is if you prompt GPT with anything to do with common sense physics or the real world. As mentioned in OpenAI's technical paper, it has difficulty answering questions like "If I put cheese into the fridge, will it melt?". It also clearly cannot understand other human concepts like puns.

One possible reason for this phenomenon is that the AI is not embodied - it has never really seen or felt a fridge before, despite having read about one many times in its training data. If you blindly use AI to generate text for your content marketing needs, you will get some inconsistencies and factual errors.

Unwanted Bias

GPT is trained on the web and, therefore, inherits the biases present in internet data. Thus, using GPT directly may lead to inappropriate or offensive content being created. One way to mitigate this is to add content filters that reject inappropriate output. Reducing unwanted bias in machine learning is still an active area of research.
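As a rough illustration of the filtering idea, the sketch below rejects any generated candidate that contains a blocklisted word. This is the simplest possible filter and an assumption on our part; production filters are usually learned classifiers, and the blocklist terms here are placeholders.

```python
import re

# Placeholder terms for illustration only; a real list would be curated.
BLOCKLIST = {"badword", "slur"}


def is_acceptable(text, blocklist=BLOCKLIST):
    """True if none of the blocklisted words appear as whole words in the text."""
    tokens = set(re.findall(r"[a-z']+", text.lower()))
    return tokens.isdisjoint(blocklist)


def filter_candidates(candidates, blocklist=BLOCKLIST):
    """Keep only the generated candidates that pass the filter."""
    return [c for c in candidates if is_acceptable(c, blocklist)]


candidates = [
    "Our guide covers ten tips for faster pages.",
    "This sentence contains a badword in it.",
]
print(filter_candidates(candidates))
```

A word list catches only the obvious cases, which is one more reason to keep a human editor in the loop.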

Domain Adaptation

Although GPT has learned a general understanding of language, it may not be appropriate for your domain. Recent research has shown that fine-tuning GPT-like models on domain-specific data can lead to even better results.

GPT works with only a few examples, but supplying it with more data will generally yield better results. Another limitation of GPT is its limited context window (2,048 tokens for GPT-3), which makes it poorly suited to tasks that use long documents as input.
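Working "with only a few examples" usually means pasting those examples into the prompt itself. The sketch below shows one way to assemble such a prompt while respecting a length limit. The `Input:`/`Output:` layout, the `build_few_shot_prompt` helper, and the use of a word count as a crude stand-in for the model's real length limit are all assumptions for illustration.

```python
def build_few_shot_prompt(examples, query, max_words=2048):
    """Join (input, output) examples and the query into one prompt.

    If the prompt is over budget, the oldest examples are dropped first.
    Words are only a rough proxy for how the model measures length.
    """
    def render(pairs):
        blocks = [f"Input: {i}\nOutput: {o}" for i, o in pairs]
        blocks.append(f"Input: {query}\nOutput:")
        return "\n\n".join(blocks)

    kept = list(examples)
    while kept and len(render(kept).split()) > max_words:
        kept.pop(0)
    return render(kept)


examples = [
    ("cheap flights", "Find Cheap Flights Today"),
    ("dog food", "The Best Dog Food for Your Pup"),
]
print(build_few_shot_prompt(examples, "running shoes"))
```

The trade-off is visible in the loop: every example you add leaves less room for the document you actually want the model to work on.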

Practical Efficiency

While it is still too early to tell, it seems like OpenAI plans to charge a premium price to use GPT. This solution may be expensive for some use cases, and the provided service is not tailored for SEO. Using or training GPT in-house is a practical challenge due to its enormous size (175 billion parameters).

This issue is a lesser concern in the long run. Several research directions aim to make GPT more efficient to run, which should lower the long-term cost.

The GPT-3 SEO Opportunity

So GPT-3 is a powerful text generation system - but what does this all mean for content marketing? Content marketing for SEO consists of many steps. It ranges from keyword research and competitor analysis to, finally, creating your content.

We see GPT mainly used to create content, but it cannot do it in isolation. Due to the limitations of the technology, it is obvious that letting the algorithm run free wouldn't yield great results. There has to be a human in the loop.

Writers Becoming Artists

GPT shines when it is used as a tool alongside human writers. How writers use AI tools without losing their voice is becoming a core skill for SEO teams. That's because human writers are great at several things that AI isn't. For example, human writers are better at high-level thinking and figuring out what to write. AI is great at low-level tasks like creating category pages from a list of web pages on a site.

A lot of effort in writing is spent on low-level problems like grammatical correctness, tone, and fluency. With GPT, a human writer's role shifts to that of an editor. Imagine painting broad brush strokes on a canvas: the AI fills in the details of the image, then the human edits these details until the result is perfect.

In a way, this is great because writers can focus on things that are more interesting - building quality content ideas and the more creative side of writing. This is better than making category pages, counting how many keywords an article needs to hit an optimal density, or making sure each sentence is fluent.

Tools to Bridge Humans and AI

The corollary of the above is that we need great user experience and tools that leverage GPT so it can work well in conjunction with writers. Broadly speaking, there are several ways to turn GPT-like technology into useful content writing tools. Here are some examples:

Readability Analysis

Having good readability is an important part of developing great content. It helps your users stay engaged and spend more time on your page, which is an important factor in ranking high on Google. But writing articles that are easy to read is easier said than done.

Here at Jenni, we've developed a tool that will do the job for you. We used technology similar to GPT 3, but adapted it to automatically rewrite sentences so that they become more readable.
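To give a feel for how readability can be measured at all, here is a sketch of the classic Flesch Reading Ease score, where higher means easier to read. This is a well-known formula and not the method behind our tool; the vowel-group syllable counter is a rough heuristic we assume for illustration, so treat the scores as approximate.

```python
import re


def count_syllables(word):
    """Rough heuristic: count groups of consecutive vowels, with a minimum of one."""
    groups = re.findall(r"[aeiouy]+", word.lower())
    return max(1, len(groups))


def flesch_reading_ease(text):
    """Flesch Reading Ease: 206.835 - 1.015 * (words/sentence) - 84.6 * (syllables/word)."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return (
        206.835
        - 1.015 * (len(words) / len(sentences))
        - 84.6 * (syllables / len(words))
    )


simple = "The cat sat on the mat. The dog ran to the park."
dense = (
    "Comprehensive organizational restructuring necessitates "
    "considerable interdepartmental communication."
)
print(round(flesch_reading_ease(simple), 1))
print(round(flesch_reading_ease(dense), 1))
```

A score like this can flag which sentences deserve a rewrite; deciding how to rewrite them is where a language model comes in.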

Smart Rephrasing

Paraphrasing is the art of using a source text without directly quoting the source material. Anytime you are taking information from a source that is not your own, you need to specify where you got that information. That question often comes up with AI as well; our breakdown of AI writing, plagiarism, and originality covers what to watch for.

The paragraph above was paraphrased from Purdue's definition using our automatic rephrasing AI. An AI that performs smart rephrasing can rewrite any sentence so that it differs from the source, or rephrase it in a desired writing style.

At Jenni, we've done studies on our writers and found that automating rephrasing can save at least 30% of a writer's time. It also allows writers to experiment with alternative phrasing of sentences, some of which may flow smoother than the original writing or convey the intent better.

Topic Optimization

Many SEO experts rely on topic optimization as a way to ensure their content ranks high on search engines. Indeed, developing a set of topics is important in order to be relevant to certain search queries, but making sure an article satisfies all topic requirements is challenging.

Our editors used to spend 1-4 hours manually optimizing topics. Using AI systems to detect topic relevance in your article can help you keep your writing on track, which will save editors from having to rewrite irrelevant content.
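The simplest version of a topic check is a coverage report: which target topics does the draft mention, and which are missing? The sketch below uses exact phrase matching, which is an assumption for illustration; an AI-based system would match on meaning rather than wording. The `topic_coverage` helper and the sample topics are hypothetical.

```python
import re


def topic_coverage(article, topics):
    """Split topics into (covered, missing) using case-insensitive whole-phrase matching."""
    text = article.lower()
    covered, missing = [], []
    for topic in topics:
        pattern = r"\b" + re.escape(topic.lower()) + r"\b"
        if re.search(pattern, text):
            covered.append(topic)
        else:
            missing.append(topic)
    return covered, missing


article = (
    "GPT-3 is a language model. "
    "Content marketing teams use it for keyword research."
)
topics = ["language model", "keyword research", "link building"]

covered, missing = topic_coverage(article, topics)
print("Covered:", covered)
print("Missing:", missing)
```

Running a check like this while drafting surfaces gaps early, before an editor has to go hunting for them.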

Summarization

As we discussed earlier, AI is excellent at low-level tasks and summarization is no exception. When it comes to content writing, we found that a common task writers perform is summarizing other text.

Summarization is a task that AI has proven to perform well in production and commercial systems. Rather than read through a dense block of text, why not have an AI give you a succinct bullet point list? In a similar spirit, you can use AI to create indexes or category pages if you have already built out your website.
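For a sense of how a bullet point summary can be produced, here is a sketch of a classic extractive approach: score each sentence by how frequent its words are in the whole text, then keep the top few. This is a frequency-based baseline rather than a GPT-style model, and the stopword list and `summarize` helper are assumptions for illustration.

```python
import re
from collections import Counter

# Small illustrative stopword list; a real one would be much longer.
STOPWORDS = {"the", "a", "an", "and", "of", "to", "is", "in", "it", "my"}


def content_words(text):
    """Lowercased words with stopwords removed."""
    return [w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS]


def summarize(text, max_sentences=2):
    """Return the highest-scoring sentences, in their original order."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    freq = Counter(content_words(text))

    def score(sentence):
        tokens = content_words(sentence)
        return sum(freq[t] for t in tokens) / len(tokens) if tokens else 0.0

    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    chosen = sorted(ranked[:max_sentences])
    return [sentences[i] for i in chosen]


text = (
    "Search engines reward useful content. "
    "Useful content answers the search query. "
    "My cat likes naps."
)
for bullet in summarize(text):
    print("-", bullet)
```

The off-topic sentence scores lowest because its words appear nowhere else in the text, so it drops out of the summary.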

Can Generated Content Rank?

Some SEO practitioners have become concerned about using automated content generation and receiving penalties from Google.

Google, like many search engines, wants to deliver the most relevant content to its users. So the main issue with generated content isn't the fact that it's generated, but rather that the intent is usually to create spam. Google has claimed that, so long as content adds real value to the user and isn't used to game the system, generated content is fine.

In fact, many big news and media outlets like Forbes already use content generation technologies to help them. The key here is to fuse the best of both worlds - human and artificial intelligence - to create compelling content. Contributing valuable knowledge to the internet will ensure that you can rank at the top even if some of your content is generated.

The Future of AI and SEO

The line between science and fiction continues to blur with the release of cutting-edge AI models like GPT. The vast improvement in quality between GPT 2 and GPT 3 in only a year's time is astounding. As time passes, the newspaper you read before breakfast is more likely to be written by someone or something that has never had an omelet in their life.

That's why we believe it's important to grasp a deeper understanding of AI technology beyond just the hype. Those who are not in the SEO field may just be impressed by the progress of AI. Those who are in the SEO field and create content will need to adapt to these tools in order to stay at the top.