By Justin Wong

How to Use AI for Peer Review: Simulate Reviewer Feedback and Strengthen Your Paper Before Submission

More than 50% of researchers now use AI while peer reviewing manuscripts, according to a 2026 survey of over 1,600 academics across 111 countries. Most are experimenting with general-purpose tools, without a structured workflow, and without knowing which parts of the process AI actually improves.

This guide covers exactly how AI peer review tools work, what they can realistically do, where they fall short, and how to use Jenni's Peer Review feature to simulate structured reviewer feedback on your own manuscript before it reaches a journal.


<CTA title="Try Reviews on Your Next Manuscript" description="Simulate peer reviewer feedback, catch unsupported claims, and submit with confidence. Free to start." buttonLabel="Try Jenni Free" link="https://app.jenni.ai/register" />

What AI Can (and Cannot) Do in Peer Review

Before choosing a tool, it helps to be precise about the limits. Researchers who over-rely on AI for peer review trade their original problems for new ones. Researchers who dismiss it entirely miss a genuinely useful step in the submission pipeline.

| AI Can Help With | AI Cannot Replace |
| --- | --- |
| Identifying unsupported claims | Expert scientific judgment in your field |
| Flagging missing or weak citations | Domain-specific evaluation of novelty |
| Checking structural consistency | Assessing methodological validity |
| Scoring manuscript soundness and presentation | Evaluating real-world significance of findings |
| Generating questions a reviewer might raise | Making final accept or reject decisions |
| Flagging tone and clarity issues | Reading the broader field context around your work |

The right mental model is this: AI is a preparation tool, not a judgment tool. A manuscript that arrives at peer review already vetted for citation gaps and unsupported claims gives the reviewer space to focus on the science, not the scaffolding.

<ProTip title="📌 Note:" description="AI peer review tools are most valuable when used on your own manuscript before submission. Using AI to generate a peer review report and submitting it as your expert opinion violates most journal ethics policies. Disclosure requirements vary by journal, so always check." />

Many journals now require disclosure of AI use in the review process. The ethical line is not complicated: AI as preparation is widely accepted, while AI as a substitute for your own expert opinion is not.

Why Pre-Submission Review Matters More Than Most Researchers Think

Rejection from a top journal can cost you three to six months of turnaround time. At journals like Nature or Science, overall rejection rates exceed 90%. Even at mid-tier journals, a significant share of manuscripts return for major revision on issues that were present before submission: unsupported claims, citation gaps, and structural inconsistencies that a careful pre-check would have caught.

The table below maps the most common reasons manuscripts receive major revision requests, and shows which ones AI can realistically help with before you submit.

| Common Reason for Major Revision | AI Pre-Check Helps? | What to Do |
| --- | --- | --- |
| Claims not supported by cited sources | Yes | Run Claim Confidence in Jenni before submission |
| Citation gaps or misattributed sources | Yes | Jenni flags mismatches between claims and cited papers |
| Discussion overstates findings | Partially | Structural feedback catches logical overreach in most cases |
| Abstract does not match paper's actual findings | Yes | Presentation score and comments flag this directly |
| Methods section not reproducible | Partially | Soundness score surfaces this; final call requires your judgment |
| Fundamental methodological flaw | No | Requires domain expert review. AI cannot assess this. |

A meaningful share of revision requests are preventable. They are not deep scientific disagreements. They are fixable structural and citation problems that a systematic pre-check surfaces in minutes.

How AI Peer Review Tools Work

Most AI peer review tools work by scanning the manuscript text, cross-referencing internal claims against citations, and evaluating structural logic. Here is what each capability actually does.

Claim Verification

AI scans the manuscript for declarative statements and checks whether each one is supported by a cited source within the document. Unsupported claims are one of the most common causes of reviewer pushback, and they are also among the most preventable. Understanding what reviewers actually look for in claims and citations makes this check especially valuable before submission.
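To make the idea concrete, here is a minimal Python sketch of a claim check. It is an illustration only, not how Jenni or any particular tool works: the citation patterns, the list of cue words, and the naive sentence splitter are all assumptions chosen to keep the example short.

```python
import re

# Assumed citation styles: numeric "[12]" or "[3, 4]", and author-year
# "(Smith, 2020)" or "(Smith et al., 2020)". Real manuscripts vary.
CITATION = re.compile(r"\[\d+(?:\s*[,-]\s*\d+)*\]|\([A-Z][^()]*\d{4}[a-z]?\)")

# Hypothetical cue words suggesting a sentence asserts a finding.
CLAIM_CUES = ("shows", "demonstrates", "increases", "reduces",
              "causes", "significantly", "proves")


def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def unsupported_claims(text: str) -> list[str]:
    """Return sentences that contain a claim cue but no citation marker."""
    flagged = []
    for sentence in split_sentences(text):
        has_cue = any(cue in sentence.lower() for cue in CLAIM_CUES)
        if has_cue and not CITATION.search(sentence):
            flagged.append(sentence)
    return flagged


sample = (
    "Sleep loss reduces working memory capacity [4]. "
    "Caffeine significantly improves recall in older adults. "
    "We recruited 40 participants."
)
for sentence in unsupported_claims(sample):
    print("Needs a source:", sentence)
```

A keyword heuristic like this misses claims phrased without the cue words and can flag sentences that describe your own results, so treat its output as a list of places to look, not a verdict.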

Citation Gap Detection

Beyond checking whether claims have citations, advanced tools verify whether the cited sources actually say what the author claims they say. A misattributed citation is subtler and more serious than a missing one. A reviewer who catches a misrepresented source will question the reliability of your entire reference list.
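A very rough way to picture this check is to compare the wording of a claim with the text of the source it cites. The sketch below uses plain word overlap as a stand-in; production tools presumably rely on semantic models, and the stopword list, the `support_score` helper, and the 0.5 threshold are hypothetical values picked for illustration.

```python
import re

# Small, assumed stopword list; a real check would use a fuller one.
STOPWORDS = {"a", "an", "and", "are", "by", "for", "in", "is",
             "of", "on", "that", "the", "to", "we", "with"}

MIN_OVERLAP = 0.5  # hypothetical threshold below which a human should look


def content_words(text: str) -> set[str]:
    """Lowercased alphabetic words with stopwords removed."""
    return {w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS}


def support_score(claim: str, source_text: str) -> float:
    """Fraction of the claim's content words that also appear in the source."""
    claim_words = content_words(claim)
    if not claim_words:
        return 0.0
    return len(claim_words & content_words(source_text)) / len(claim_words)


def needs_manual_check(claim: str, source_text: str,
                       threshold: float = MIN_OVERLAP) -> bool:
    """True when the overlap is too low to trust the citation at a glance."""
    return support_score(claim, source_text) < threshold


abstract = "We found that caffeine improves recall among older adults in a memory task."
print(support_score("Caffeine improves recall in older adults", abstract))
print(needs_manual_check("Caffeine reduces anxiety in teenagers", abstract))
```

Word overlap cannot see negation or paraphrase, so a high score does not prove the source supports the claim. The only reliable confirmation is reading the cited passage yourself.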

Structural Feedback

AI evaluates whether the manuscript follows the logical structure expected for its paper type. Is the Methods section reproducible? Does the Discussion overstate findings relative to Results? Does the abstract accurately reflect what the paper actually found? These are structural integrity checks that are genuinely hard to catch when you have been working on the same document for months.

Tone and Clarity Analysis

AI flags overly complex sentences, inconsistent terminology, and ambiguous phrasing throughout the manuscript. This is particularly useful for researchers preparing manuscripts in their second language, where register and precision matter as much as the content itself.
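Two of these checks are simple enough to sketch yourself. The Python example below flags sentences over a word limit and spots competing spellings of the same term. The 30-word limit and the variant groups are assumptions for illustration; pick values that suit your field and target journal.

```python
import re

MAX_WORDS = 30  # hypothetical limit; adjust to your field's norms

# Hypothetical groups of spellings that should not be mixed in one paper.
TERM_VARIANTS = [
    {"dataset", "data set"},
    {"healthcare", "health care"},
]


def long_sentences(text: str, max_words: int = MAX_WORDS) -> list[str]:
    """Return sentences with more than max_words whitespace-separated words."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    return [s for s in sentences if len(s.split()) > max_words]


def inconsistent_terms(text: str) -> list[list[str]]:
    """Return each variant group where more than one spelling is used."""
    lowered = text.lower()
    findings = []
    for variants in TERM_VARIANTS:
        used = sorted(
            v for v in variants
            if re.search(rf"\b{re.escape(v)}\b", lowered)
        )
        if len(used) > 1:
            findings.append(used)
    return findings


draft = "We built a dataset of 500 abstracts. The data set was cleaned by hand."
print(inconsistent_terms(draft))
print(long_sentences(draft))
```

Ambiguous phrasing is much harder to detect with rules like these, which is where a language-model-based review earns its place.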

<ProTip title="💡 Pro Tip:" description="Run tone and clarity analysis on your abstract first. Reviewers and editors form their first impression of your paper there. A clear, precise abstract sets the tone for how generously the rest of the paper gets read." />

How to Use Jenni's Peer Review Feature — Step by Step

Jenni's Peer Review feature simulates structured academic reviewer feedback, scoring your manuscript across three dimensions and returning specific, line-level comments. Here is exactly how to run it.

Step 1 — Open Your Manuscript in Jenni

Click Documents in the left sidebar and select the manuscript you want to analyze. If it is not already in your library, drag and drop the PDF to upload it first. Once open, the document is ready for analysis.

Step 2 — Open the Review Panel and Select Peer Review

Click Review in the top toolbar. The panel opens on the right and shows four available review types: Claim Confidence, Proofread, Peer Review, and Tone of Voice. Click Run review under the Peer Review card to start the analysis.

Step 3 — Wait for the Analysis to Complete

Jenni processes the full manuscript before returning results. This is not a grammar scan: Jenni reads the paper the way an academic reviewer would, evaluating argument structure, citation quality, and field contribution. The processing time reflects that depth.

<ProTip title="📝 Note:" description="Jenni Reviews is not a shortcut. Think of it as a thorough, fast colleague who has read your entire paper and flagged anything that could cause friction with a reviewer. You still make every decision." />

Step 4 — Read Your Overall Assessment

Once complete, Jenni returns a scored assessment across three dimensions: Soundness, Presentation, and Contribution, each rated out of 4. An overall score out of 10 appears below, alongside a Results paragraph that gives you the big-picture verdict in plain language. Read the Results paragraph before you look at the individual scores.

Step 5 — Work Through Weaknesses, Strengths, and Questions for the Authors

Below the overall scores, Jenni returns three structured lists that mirror how a real reviewer writes their formal report. Weaknesses (flagged with orange dashes) identify the manuscript's most significant gaps. Strengths (marked with green pluses) show what is working well, which matters just as much: these are the sections to leave intact during revision. Questions for the Authors (in blue) surface the specific points a reviewer would almost certainly raise.

Treat the Questions for the Authors list as a preview of your next reviewer's report. If you can answer every question in that list before submitting, you have already done the most important revision work.

<ProTip title="💡 Pro Tip:" description="The Questions for the Authors section is often the most actionable output. Each question maps directly to something a real reviewer would raise. Work through it like a checklist before you write a single word of your revision." />

Step 6 — Work Through the Inline Comments

Navigate to the Comments panel to see Jenni's line-level feedback. Comments are tagged by severity. Start with anything marked Major. Each comment identifies the specific issue and explains why it matters, in the same direct language a real reviewer would use. Do not accept every suggestion automatically. Your expertise decides what changes are warranted.
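If you copy the comments into your own notes or a revision tracker, the same Major-first order is easy to reproduce. This Python sketch assumes a hypothetical `Comment` record with a line number, a severity tag, and the issue text; it does not reflect any export format Jenni provides.

```python
from dataclasses import dataclass

# Lower rank sorts first. Unknown tags fall to the end of the list.
SEVERITY_ORDER = {"Major": 0, "Minor": 1}


@dataclass
class Comment:
    """Hypothetical record for one inline comment copied from a review."""
    line: int
    severity: str
    issue: str


def triage(comments: list[Comment]) -> list[Comment]:
    """Sort comments with Major first, then by position in the manuscript."""
    return sorted(
        comments,
        key=lambda c: (SEVERITY_ORDER.get(c.severity, len(SEVERITY_ORDER)), c.line),
    )


notes = [
    Comment(40, "Minor", "Inconsistent abbreviation"),
    Comment(12, "Major", "Claim lacks a supporting citation"),
    Comment(5, "Minor", "Long sentence in abstract"),
    Comment(88, "Major", "Discussion overstates the result"),
]
for comment in triage(notes):
    print(f"[{comment.severity}] line {comment.line}: {comment.issue}")
```

Sorting by line number within each severity keeps related fixes next to each other, so you can work through the manuscript once per severity level.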

<ProTip title="💡 Pro Tip:" description="Run Jenni Reviews on both your first draft and your final version. It takes a few minutes and often catches something new introduced during editing, such as a paragraph that got restructured and lost its citation in the process." />

How to Read Your Jenni Peer Review Score

The three-dimensional scoring framework mirrors the criteria real reviewers apply when making publication recommendations. Knowing what each score range signals helps you prioritize where to spend your revision time rather than trying to fix everything at once.

| Score Range | What It Signals | Priority Action |
| --- | --- | --- |
| 4/4 on any dimension | Strong. No significant issues in this area. | Check Strengths list to confirm what to preserve. |
| 3/4 on any dimension | Minor gaps. Fixable before resubmission. | Work through Comments for this dimension specifically. |
| 2/4 on any dimension | Meaningful gaps that reviewers will flag. | Prioritize this area. Address Weaknesses list first. |
| 1/4 on any dimension | Significant issues. High risk of rejection. | Address this before submitting anywhere. |
| Overall 8 to 10 out of 10 | Manuscript is in strong submission shape. | Targeted polish. Answer the Questions for the Authors. |
| Overall 5 to 7 out of 10 | Solid foundation. Systematic revision needed. | Work through the full Comments panel by severity. |
| Overall 1 to 4 out of 10 | Substantial revision required. | Thorough rework before submitting to any journal. |

A low Soundness score is the most urgent flag. Reviewers can overlook presentation issues but rarely forgive a manuscript where core claims are not supported by evidence. A low Contribution score often points to a framing problem rather than a research problem. That is typically faster to fix than it sounds.
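The score table translates directly into a small lookup, which can help if you track several drafts or papers at once. The Python sketch below encodes the ranges and priority actions from the table and lists the weakest dimension first, with Soundness winning ties. The function names are hypothetical, and the sketch assumes whole-number scores, which may not match what the tool returns.

```python
# Priority actions per dimension score, taken from the table above.
DIMENSION_ACTIONS = {
    4: "Check Strengths list to confirm what to preserve.",
    3: "Work through Comments for this dimension specifically.",
    2: "Prioritize this area. Address Weaknesses list first.",
    1: "Address this before submitting anywhere.",
}


def overall_action(score: int) -> str:
    """Map an overall score (1 to 10) to the table's priority action."""
    if not 1 <= score <= 10:
        raise ValueError("overall score must be between 1 and 10")
    if score >= 8:
        return "Targeted polish. Answer the Questions for the Authors."
    if score >= 5:
        return "Work through the full Comments panel by severity."
    return "Thorough rework before submitting to any journal."


def revision_plan(soundness: int, presentation: int,
                  contribution: int, overall: int) -> list[str]:
    """List actions with the lowest-scoring dimension first."""
    dimensions = {
        "Soundness": soundness,
        "Presentation": presentation,
        "Contribution": contribution,
    }
    for name, score in dimensions.items():
        if score not in DIMENSION_ACTIONS:
            raise ValueError(f"{name} score must be between 1 and 4")
    # sorted() is stable, so ties keep Soundness ahead of the other dimensions.
    ordered = sorted(dimensions.items(), key=lambda item: item[1])
    plan = [f"{name} ({score}/4): {DIMENSION_ACTIONS[score]}" for name, score in ordered]
    plan.append(f"Overall ({overall}/10): {overall_action(overall)}")
    return plan


for step in revision_plan(soundness=2, presentation=3, contribution=2, overall=6):
    print(step)
```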

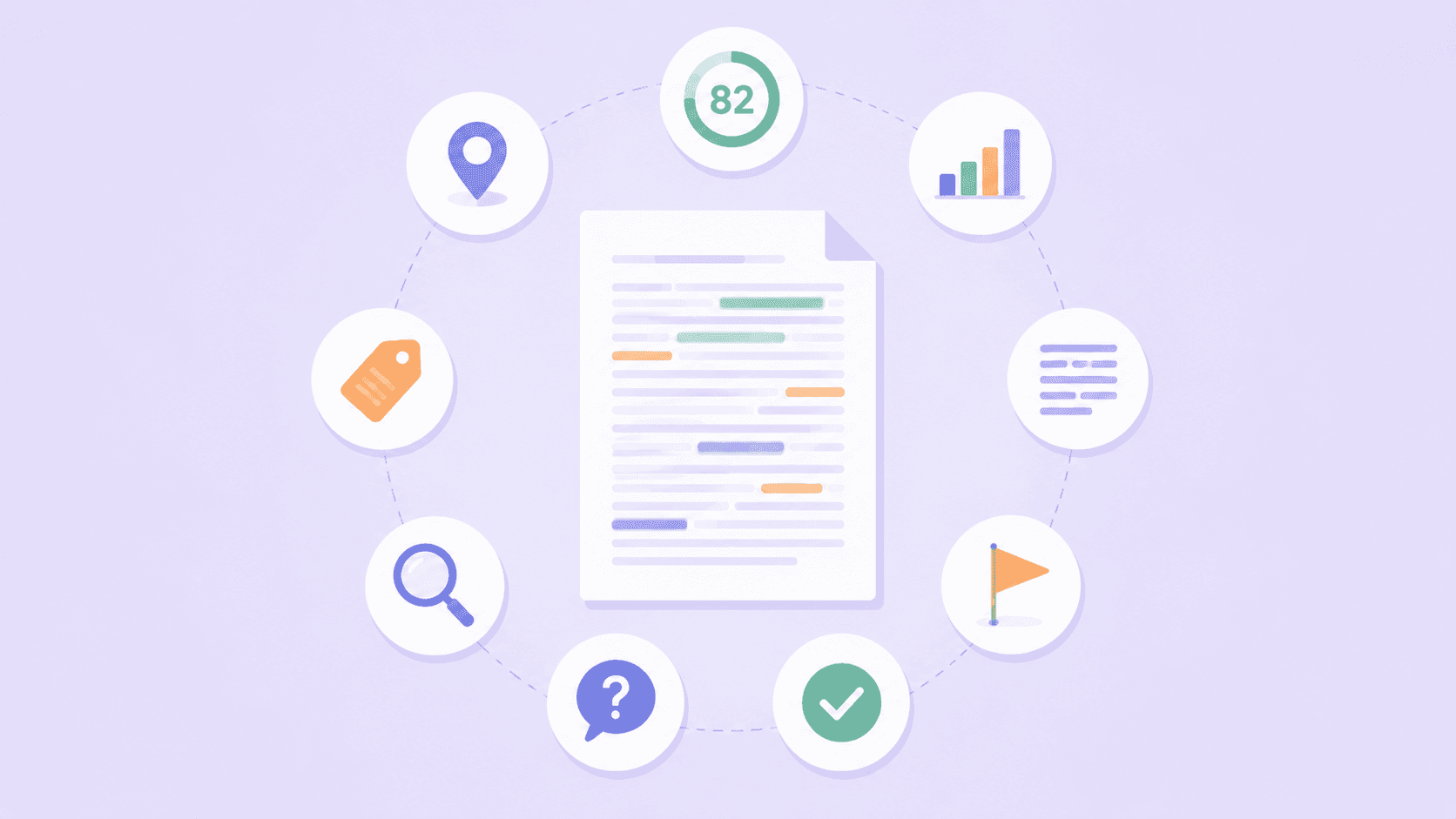

What Jenni's Peer Review Feature Returns

Each Peer Review run returns a full structured report you can act on immediately. Here is the complete output:

✅ Soundness score (out of 4) — methodology quality, evidence strength, and citation integrity across the manuscript

✅ Presentation score (out of 4) — writing clarity, structural logic, and whether the abstract accurately reflects the paper's findings

✅ Contribution score (out of 4) — originality, field relevance, and significance of the research question

✅ Overall composite score (out of 10) — a single benchmark to track improvement across drafts

✅ Results paragraph — plain-language big-picture verdict before you get into the specifics

✅ Weaknesses list — the gaps and problems a real reviewer would flag, written in direct reviewer language

✅ Strengths list — what is working well, so you know exactly what not to change during revision

✅ Questions for the Authors — the specific questions your manuscript currently leaves unanswered that a reviewer would raise

✅ Inline comments by severity — line-level feedback tagged as Major or Minor, each identifying the issue and explaining why it matters
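Because the scores are meant to be tracked across drafts, it can help to record them after each run. Here is a minimal Python sketch for comparing two runs; the `ReviewScores` record and both helper functions are hypothetical, and you would enter the numbers by hand from each report.

```python
from dataclasses import dataclass

SCORE_NAMES = ("soundness", "presentation", "contribution", "overall")


@dataclass(frozen=True)
class ReviewScores:
    """Hypothetical record of the four scores from one review run."""
    soundness: int      # out of 4
    presentation: int   # out of 4
    contribution: int   # out of 4
    overall: int        # out of 10


def score_changes(before: ReviewScores, after: ReviewScores) -> dict[str, int]:
    """Difference per score: positive means the later draft improved."""
    return {name: getattr(after, name) - getattr(before, name) for name in SCORE_NAMES}


def regressions(before: ReviewScores, after: ReviewScores) -> list[str]:
    """Names of scores that dropped between drafts."""
    return [name for name, delta in score_changes(before, after).items() if delta < 0]


first_draft = ReviewScores(soundness=2, presentation=3, contribution=2, overall=5)
final_draft = ReviewScores(soundness=3, presentation=3, contribution=3, overall=7)
print(score_changes(first_draft, final_draft))
print(regressions(first_draft, final_draft))
```

A drop in any score between drafts is a cue to reread what changed, since edits sometimes remove a citation or weaken an argument that was working.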

Frequently Asked Questions

What is an AI peer review tool?

→ An AI peer review tool analyzes your manuscript before submission, simulating the structured feedback a real reviewer would provide. It checks for unsupported claims, citation gaps, structural issues, and writing clarity. It does not replace expert peer review. Its value is helping you catch and fix preventable problems before a human reviewer sees the paper.

Can AI replace peer review?

→ No. AI peer review tools are preparation tools, not substitutes for expert judgment. They can flag unsupported claims, score manuscript soundness, and surface structural gaps. But assessing methodological validity, evaluating originality, and making accept or reject decisions require domain expertise that AI does not have. Most journals also prohibit AI-generated reviews submitted as human expert opinion.

What does Jenni's Peer Review feature check?

→ Jenni scores your manuscript across three dimensions: Soundness, Presentation, and Contribution, each rated out of 4, with an overall score out of 10. It also returns a Results paragraph, a Weaknesses list, a Strengths list, Questions for the Authors, and inline comments tagged by severity. Each comment identifies the specific issue and explains why it matters to reviewers.

How is Jenni different from other AI writing tools?

→ Most AI writing tools focus on grammar and style. Jenni's Peer Review feature simulates academic reviewer feedback specifically, scoring manuscripts on Soundness, Presentation, and Contribution. It also surfaces Weaknesses, Strengths, and Questions for the Authors, the same structured outputs a real reviewer would include. It is built for researchers, which changes both what it checks and how it explains its findings.

Review Your Own Work Like a Reviewer Would

The gap between a paper that gets major revisions and one that gets accepted often comes down to how well the author anticipated reviewer concerns. A structured AI pre-check gives you a practical way to simulate that process before submission, catching the fixable issues so reviewers can focus on the substantive questions rather than the structural ones.

<CTA title="Run Jenni Reviews on Your Manuscript Today" description="Upload your paper, run the Peer Review feature, and catch what human reviewers would flag before they do. Free to start." buttonLabel="Try Jenni Free" link="https://app.jenni.ai/register" />

Start with your claims. If every factual statement in your paper has a source, and every source actually says what you claim it says, you are already ahead of most submissions that land on a reviewer's desk. For the full picture on types of peer review, how to write a peer review report, or how to respond to peer review comments, explore the rest of our peer review series.